Compute Resources

The LUHPCC maintains a 240-core Linux cluster named Wesley, which was installed in the summer of 2011.

System Name: wesley.lakeheadu.ca

Operating System: CentOS 5.5

Number of Nodes: 20

Total Number of Processing Cores: 240

Number of Processing Cores Per Node: 12

Number of Fermi GPUs: 1

Interconnection Type: Gigabit Ethernet (1 Gb/s)

RAM Per Node: 16 @ 48 GB, 4 @ 96 GB

RAM Per Core: 192 @ 4 GB, 48 @ 8 GB

Permanent Shared (NFS) Disk Space: 12 TB

Scratch Disk Space Per Node: 1 TB (2x500 GB in RAID 0)

Batch Facility/Scheduler: Torque and Maui
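
Because jobs are submitted through Torque, a resource request has to fit within the limits listed above (at most 20 nodes, 12 cores per node). The following is a minimal sketch, not an official LUHPCC template: the helper `make_job_script`, the job name, the walltime, and the command are hypothetical placeholders. The `#PBS -N`, `#PBS -l nodes=...:ppn=...`, and `#PBS -l walltime=...` directives and the `PBS_O_WORKDIR` variable are standard Torque features. Site-specific queue names and policies are not described here and are left out.

```python
# Hypothetical helper: builds a Torque (PBS) job script as a string.
# The limits come from the specification list above; the job name,
# walltime, and command are illustrative placeholders.

MAX_NODES = 20        # compute nodes in Wesley
CORES_PER_NODE = 12   # processing cores per node


def make_job_script(name, nodes, ppn, walltime, command):
    """Return a PBS script requesting `nodes` nodes with `ppn` cores each."""
    if not 1 <= nodes <= MAX_NODES:
        raise ValueError(f"nodes must be between 1 and {MAX_NODES}")
    if not 1 <= ppn <= CORES_PER_NODE:
        raise ValueError(f"ppn must be between 1 and {CORES_PER_NODE}")
    lines = [
        "#!/bin/bash",
        f"#PBS -N {name}",
        f"#PBS -l nodes={nodes}:ppn={ppn}",
        f"#PBS -l walltime={walltime}",
        "cd $PBS_O_WORKDIR",  # directory the job was submitted from
        command,
    ]
    return "\n".join(lines) + "\n"


# Example: one full node (12 cores) for one hour.
print(make_job_script("example", 1, 12, "01:00:00", "./my_program"))
```

The generated text could be saved to a file and passed to `qsub`. Validating the request before submission avoids queuing a job that can never be scheduled on this hardware.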

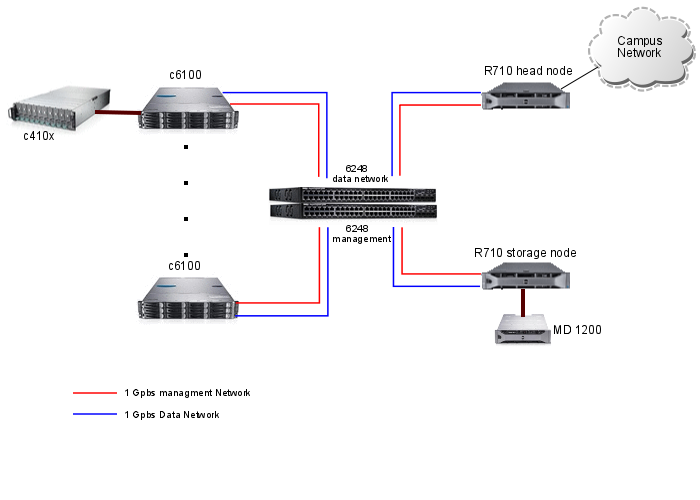

Wesley is composed of 20 compute nodes housed within five Dell PowerEdge C6100 series chassis (4 nodes/chassis), a PowerEdge R710 login/head node, and a PowerEdge R710 storage node with 12 TB of direct-attached storage (DAS) in a PowerVault MD1200. A PowerConnect 6248 Gigabit Ethernet switch serves as the interconnection fabric between all of these components, making Wesley suitable for serial farming and low-bandwidth MPI cross-node calculations. A PowerEdge C410x PCIe Expansion Chassis provides general-purpose graphics processing unit (GPGPU) capabilities. The C410x can hold up to 16 GPGPUs; a single Nvidia Tesla M2050 is currently installed.

Each compute node contains two Intel Xeon X5650 hex-core processors @ 2.66 GHz and two 500 GB SATA drives in a hardware RAID 0 configuration (for 1 TB capacity and higher throughput). Sixteen of the twenty nodes have 48 GB of RAM; the remaining four (wes-04-00 through wes-04-03) have 96 GB.
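
The per-node figures above can be cross-checked against the cluster-wide totals in the specification list. The short sketch below is only an arithmetic check. The variable names are illustrative, and the aggregate RAM and scratch figures are derived here rather than quoted from the specification.

```python
# Node groups as described above: (number of nodes, RAM per node in GB).
NODE_GROUPS = [(16, 48), (4, 96)]
CORES_PER_NODE = 2 * 6    # two hex-core Xeon X5650 processors
SCRATCH_TB_PER_NODE = 1   # 2 x 500 GB in RAID 0

total_nodes = sum(count for count, _ in NODE_GROUPS)
total_cores = total_nodes * CORES_PER_NODE
total_ram_gb = sum(count * ram for count, ram in NODE_GROUPS)
total_scratch_tb = total_nodes * SCRATCH_TB_PER_NODE

print("nodes:", total_nodes)                         # 20
print("cores:", total_cores)                         # 240
print("aggregate RAM (GB):", total_ram_gb)           # 1152
print("aggregate scratch (TB):", total_scratch_tb)   # 20

# RAM per core for each node group.
for count, ram in NODE_GROUPS:
    cores = count * CORES_PER_NODE
    print(f"{cores} cores @ {ram // CORES_PER_NODE} GB per core")
# 192 cores @ 4 GB per core
# 48 cores @ 8 GB per core
```

The results match the listed 20 nodes, 240 cores, and the RAM-per-core split of 192 cores at 4 GB and 48 cores at 8 GB.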

The login and storage nodes each contain two Intel Xeon X5650 hex-core processors @ 2.66 GHz, mirrored 146 GB SAS operating system drives, and 24 GB of RAM.